By Nadia Lahham

Key Takeaways:

- AI can write it, but can you defend it? Every tool produced a polished output quickly. The real divide was whether any single number could be traced back to a named primary source.

- Cited doesn’t always mean sourced. Some tools surfaced links alongside their outputs, which looks like transparency. But many led to secondary aggregators rather than original datasets. Every step away from the primary source adds risk.

- The stage you’re in determines the tool you need. For early ideation, general-purpose LLMs are fast and effective. But when the output is heading into an investor deck or board memo, the verification requirement doesn’t go away. It gets deferred, usually to the worst possible moment.

Generative AI can produce a competitive analysis in minutes, but defending that analysis in front of an investor is a very different test.

That gap is exactly what I wanted to examine.

This started from a pattern I kept hearing in conversations with founders and strategy consultants. The research comes together quickly, the deck looks polished, and then someone asks a simple question: “Where does this number come from?”

It is a small moment, but it has a way of unraveling everything that came before it. The figure had come from ChatGPT, and there was no valid source or citation.

Over the past year, I kept hearing the same story in different forms (AI spitting out answers that are polished, albeit wrong), and those stories shaped how we built the solution, Intellihance.

AI can generate a competitive analysis fast, but speed is not the same as trust. When the stakes are real and someone asks where the numbers came from, the weakness shows. We wanted to see exactly where AI held up, where it broke down, and what it takes to turn fast analysis into something you can defend.

How We Tested the Top Generic AI Platforms Compared to Intellihance

We gave six AI systems the exact same prompt, with no additional configuration, tuning, or context on any platform: ChatGPT 5.2 (default), ChatGPT 5.2 (paid), Perplexity Research, Grok, Claude Sonnet, and Intellihance.

- The prompt: “Generate an Ideal Customer Profile, buyer personas, pain points, product mapping, and buyer-journey questions for a U.S.-based B2B business.”

- The objective: To assess how effectively each AI system performs when applied to real-world strategic analysis, not general text generation, and without tuning, extra context, or second chances.

We chose this task deliberately. An ICP and buyer persona exercise is not speculative. In real business contexts, these outputs end up in investor memos, sales playbooks, and go-to-market strategies. They need to hold up under scrutiny, not just “read well” in a document.

The scoring framework measured each platform on its ability to produce strategy-grade output, not just polished language. We evaluated whether the work was logically connected, defensible in a business setting, useful for real decision-making, grounded in reliable reasoning, produced within a structure that supports repeatable analysis, and ready for use in client-, leadership-, or investor-facing reporting. The goal was to separate outputs that merely looked convincing from outputs that could genuinely support strategic action.

Scoring Criteria For AI-Driven Market Research

1. Strategic Coherence

How logically and consistently the output fits together as a business strategy.

This looked at whether the ICP, personas, pain points, product mapping, and buyer journey aligned with one another instead of feeling disconnected or contradictory.

2. Defensibility

How well the output could stand up to scrutiny from a founder, investor, strategist, or sales leader.

This measured whether the recommendations felt grounded, believable, and explainable rather than generic, inflated, or hard to justify.

3. Output Actionability

How easy the output would be to use in a real business setting.

This assessed whether the material could actually inform sales messaging, GTM planning, positioning, or execution without needing major rework.

4. Data Integrity

How reliable, internally sound, and trustworthy the information appeared to be.

This included whether claims were realistic, whether the logic held up, and whether the platform avoided invented, vague, or misleading assumptions.

5. Structured Environment

How well the platform supported disciplined strategic work rather than one-off text generation.

This reflected whether the system produced output in a way that felt organized, repeatable, and suited for business analysis.

6. Reporting Readiness

How close the output was to something that could be presented to a client, leadership team, or investor.

This measured clarity, completeness, professionalism, and how much editing would be needed before the work could be shared.
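For readers who want to reuse the rubric, the six criteria above can be captured in a small scorecard structure. This is only a minimal sketch: the `Scorecard` class, its method names, and the 1-to-5 star range are assumptions made for illustration, not the tooling used in this comparison. The only scores it uses are the five-star Intellihance ratings reported in this article.

```python
from dataclasses import dataclass, field

# The six criteria named in this article.
CRITERIA = (
    "Strategic Coherence",
    "Defensibility",
    "Output Actionability",
    "Data Integrity",
    "Structured Environment",
    "Reporting Readiness",
)


@dataclass
class Scorecard:
    """Hypothetical container for one platform's star ratings."""

    platform: str
    stars: dict = field(default_factory=dict)

    def rate(self, criterion: str, value: int) -> None:
        # Reject criteria outside the rubric and ratings outside the
        # assumed 1-5 star range.
        if criterion not in CRITERIA:
            raise ValueError(f"unknown criterion: {criterion}")
        if not 1 <= value <= 5:
            raise ValueError("stars must be between 1 and 5")
        self.stars[criterion] = value

    def is_complete(self) -> bool:
        # A scorecard is complete once every criterion has a rating.
        return all(c in self.stars for c in CRITERIA)

    def average(self) -> float:
        if not self.is_complete():
            raise ValueError("score every criterion before averaging")
        return sum(self.stars[c] for c in CRITERIA) / len(CRITERIA)


# Intellihance earned five stars on every criterion in this exercise.
card = Scorecard("Intellihance")
for criterion in CRITERIA:
    card.rate(criterion, 5)
print(card.average())  # 5.0
```

Refusing to average an incomplete scorecard keeps platforms comparable: a tool cannot look stronger simply because a weak category was left unscored.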

The Results: The Best AI Tools to Automate Market Research

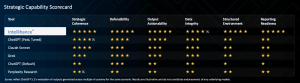

We used ChatGPT 5.2 itself as the evaluator to assess outputs across all six platforms on the same criteria. Intellihance was the clear top performer, earning five stars across every category.

Claude Sonnet ranked in the middle, while Perplexity Research and Grok performed better than the default ChatGPT experience in some areas by offering more visible sourcing or structure. ChatGPT’s paid, tuned version improved meaningfully over the default, but both still showed the same underlying limitation: polished output without a reliable foundation.

Here is the scorecard from the exercise. Results are illustrative and do not constitute endorsements of any underlying models.

ChatGPT (Default & Paid, Tuned): Strong Start, Weak Foundation

The default output was well-organized and readable. It identified the right categories, used familiar competitor names, and produced something that looked complete. The paid, tuned version scored meaningfully higher on strategic quality, reflecting the real difference that prompt engineering and configuration can make.

In both cases, however, the figures couldn’t be traced. References to “industry reports” appeared without publication names or dates. Competitive data was presented without a clear methodology. The analysis could anchor a brainstorm, but it would require significant additional work before it could anchor a decision.

Perplexity Research & Grok: Visible Links, Indirect Grounding

Perplexity’s approach of surfacing links with its responses initially suggested greater transparency. Grok produced structured output with some contextual depth. Both scored above the ChatGPT default on certain criteria.

The issue with the linked sources was that many led to secondary aggregators rather than primary datasets. An article citing a report is not the same as the report. The additional step between the analysis and the original data source is small in isolation, but it compounds quickly when you’re trying to defend multiple figures in a single presentation.

Claude Sonnet: Balanced, Limited Depth

Claude Sonnet scored consistently in the middle range across most criteria. The output was coherent and well-structured, with reasonable coverage of the task. Like the other general-purpose models, however, it wasn’t drawing on a controlled data pipeline, which placed a ceiling on data integrity and structured environment scores.

Intellihance: Five Stars Across All Six Criteria

The difference wasn’t in the presentation layer; it was in what happened underneath it.

Because Intellihance is built on a controlled data pipeline connected to trusted sources, including IBISWorld and government datasets (BLS, BEA, Census, and more), each figure in the output arrived with a citation attached at the point it was introduced. Market sizing was segmented into TAM, SAM, and SOM, with each layer supported by its own methodology. Growth trends referenced defined datasets with clear publication timelines.

The practical result: at any point in the analysis, a user could trace a number back to its origin without additional research.
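One way to picture that traceability is as a data structure in which every figure carries its citation, so a deck can be audited before anyone asks. The sketch below is an assumption-laden illustration, not a description of how Intellihance is implemented: the `Citation` and `Figure` classes, the `audit` function, and every value in the example are hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Citation:
    """Hypothetical record of where a figure came from."""

    publisher: str
    dataset: str
    published: str    # publication date or period, as free text
    is_primary: bool  # False for aggregators or articles citing a report


@dataclass(frozen=True)
class Figure:
    """A number in the analysis, with its citation attached (or missing)."""

    label: str
    value: float
    citation: Optional[Citation] = None


def audit(figures: List[Figure]) -> List[str]:
    """Return labels of figures that cannot be traced to a primary source."""
    return [
        f.label
        for f in figures
        if f.citation is None or not f.citation.is_primary
    ]


# Placeholder values for illustration only; none of these are real data.
deck = [
    Figure("market size", 1.0,
           Citation("Example Agency", "Example Survey", "2024", True)),
    Figure("growth rate", 2.0,
           Citation("Example Blog", "Roundup post", "2024", False)),
    Figure("churn benchmark", 3.0),
]
print(audit(deck))  # ['growth rate', 'churn benchmark']
```

The `is_primary` flag encodes the distinction drawn earlier: a figure with a link to a secondary aggregator is flagged just like a figure with no citation at all.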

The Difference Between AI Research Tools and Chatbots (LLMs)

By the end of this comparison, the more useful observation wasn’t about which tool performed better. It was about which stage of the workflow each tool was built for.

General-purpose LLMs are genuinely strong at the beginning of an analytical process: collapsing ambiguity into structure quickly, surfacing the right questions, and producing a coherent first draft of thinking. That strength has a boundary, and it becomes visible the moment the output has to perform: when someone must stand behind it in a room, defend a number, or explain its origin.

Most teams don’t recognize which moment they’re in until they’ve already committed to an output. So the question worth asking before generating AI-assisted research isn’t which tool is best. It’s what you’ll need to be able to prove, and when.

For early ideation, general-purpose tools serve that stage well. But when the output is heading somewhere that requires verification, that requirement doesn’t go away just because the draft came together quickly. It gets deferred, usually to the worst possible moment.

That is the problem Intellihance was built to solve. Accessing reliable market insights takes far longer than it should for founders, consultants, and teams operating under real pressure and time constraints. The data foundation sits at the center of the product because that is where the problem lives. The real distinction this comparison surfaced wasn’t in what the tools could produce. It was in what their outputs could withstand.

FAQs:

What was this experiment actually testing?

Whether AI-generated competitive analysis could hold up under real business scrutiny. Not just whether it read well, but whether every data point could be traced to a named, verifiable primary source.

What was the exact prompt used?

All six tools received the same prompt with no additional setup: “Generate an Ideal Customer Profile, buyer personas, pain points, product mapping, and buyer-journey questions for a U.S.-based B2B business.”

Which tools were included and why?

ChatGPT 5.2 (default and paid/tuned), Perplexity Research, Grok, Claude Sonnet, and Intellihance. The selection covers the most widely used general-purpose AI tools (at the time) alongside Intellihance, which was built specifically for sourced market research.

How were the outputs evaluated?

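Across six criteria: strategic coherence, defensibility, output actionability, data integrity, structured environment, and reporting readiness, with ChatGPT 5.2 acting as the evaluator for all six platforms. Each criterion reflects what experienced investors and operators actually ask when reviewing market research.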

Why use an ICP and buyer persona task specifically?

Because it isn’t speculative. These outputs feed directly into investor memos, go-to-market strategies, and sales playbooks, which makes them a meaningful test of research quality rather than writing quality alone.

What separated Intellihance from the other tools?

A structural difference, not a cosmetic one. Intellihance pulls from a controlled pipeline connected to databases and services (United States Census Bureau, BLS, Google Maps, IBISWorld, Bureau of Economic Analysis, OpenAI), so citations are embedded at the point each figure is introduced. Market sizing was broken into TAM, SAM, and SOM, each supported by its own methodology.